Waymo Operator Overrode Safety Stop, Sent Robotaxi Past School Bus as UK Bets $103M on Industrial Autonomy

A remote Waymo operator told a stopped robotaxi to illegally pass an Austin school bus in January, exposing the 'human-in-the-loop' failure that federal investigators are now probing. Meanwhile, UK-based Oxa raised $103 million to deploy autonomous vehicles in ports and factories — not public roads — betting that controlled environments will prove autonomy before city streets do.

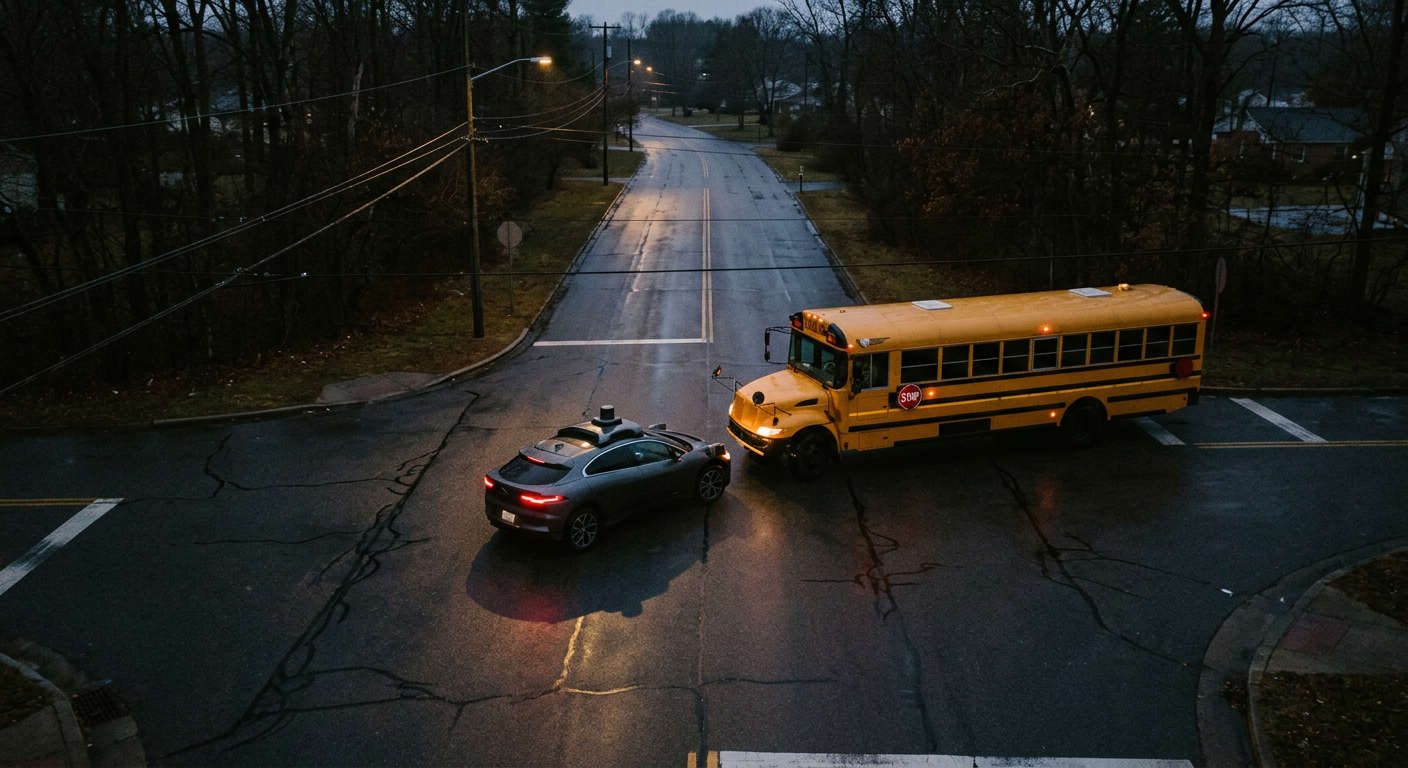

On the morning of January 12, 2026, a Waymo robotaxi in Austin did exactly what it was programmed to do: it stopped in front of a school bus loading children and asked for help. The autonomous system flagged the situation as ambiguous and pinged its remote safety operator with a question: "Is this a school bus with active signals?" The human operator, monitoring the vehicle from somewhere outside Austin, responded "No." The robotaxi then illegally passed the bus, according to the National Transportation Safety Board, which has opened a federal investigation.

The incident, reported by The Robot Report, reveals a critical vulnerability in the autonomous vehicle industry's safety architecture. Waymo's vehicle performed its safety stop correctly; the technology worked. But the remote human operator, meant to serve as a failsafe for edge cases, made a decision that violated Texas Transportation Code Section 545.066, which requires all vehicles to remain stopped until a school bus deactivates its signals. The Waymo vehicle was first in line, and after it moved, five human-driven cars followed suit, compounding the risk to children.

This wasn't an isolated lapse. In September 2025, WXIA-TV in Atlanta aired footage of another Waymo robotaxi illegally passing a stopped school bus. The NTSB is now investigating multiple incidents involving Austin Independent School District buses, seeking to determine probable cause and issue safety recommendations. The probe arrives at an awkward moment for the autonomous vehicle industry, which has spent years positioning its technology as inherently safer than human drivers. Waymo itself reports that its vehicles are involved in fewer serious crashes than human-driven vehicles, and the National Highway Traffic Safety Administration acknowledges that driver assistance systems can help anticipate road hazards. But the Austin incident exposes a different problem: a geographical and situational disconnect between remote operators and the vehicles they're supposed to supervise.

Stephen Burg, a Denver accident attorney with Burg Simpson, told The Denver Post that while he's "excited about the advancement in technology," autonomous vehicles "need to be properly regulated so that we're not passing the buck onto the taxpayers." His firm is already handling cases involving Tesla's Autopilot technology, including a landmark 2025 verdict that found the company partially responsible for a fatal collision. Burg's concern is that as autonomous vehicle lobbyists pour millions into shaping legal frameworks — some seeking immunity for AI-powered vehicles — everyday people could be left without recourse after crashes. "There are people who lose everything, and it's no fault of their own," he said, warning that victims sometimes end up on public benefits due to medical bills or job loss after collisions with autonomous vehicles.

The liability questions are thorny. In traditional car accidents, responsibility is typically divided between two drivers and, occasionally, a vehicle manufacturer. Autonomous technology introduces a third party: the software company and its remote operators. When a robotaxi fails to stop for a school bus, is the manufacturer liable? The remote operator? The passenger, who had no control? Burg argues that without clear accountability, insurance companies and manufacturers could dodge responsibility, leaving victims to shoulder the financial burden. "If you're taking on an auto manufacturer and saying that their vehicle is defective, you're basically saying their engineers and their technology is wrong," he noted. "We have to prove otherwise."

While Waymo grapples with public road incidents, a UK startup is taking a different approach entirely. Oxa, an Oxford-based autonomous vehicle software company, announced it has raised $103 million in the first close of its Series D round, according to The Next Web. The round includes a $50 million commitment from the UK National Wealth Fund — a signal of strategic government interest in domestic robotics — alongside investments from NVentures (NVIDIA's venture arm), IP Group, Hostplus, and bp Ventures. Oxa's bet is that autonomy will scale first in logistics yards, not city streets.

Oxa doesn't manufacture vehicles. Instead, it retrofits existing industrial fleets — tow tractors, logistics shuttles — with its software stack, which includes Oxa Driver (the autonomy software), Oxa Foundry (a development toolkit), and Oxa Hub (fleet management). The company targets controlled environments like ports, airports, factories, and solar farms, where autonomous driving faces fewer regulatory barriers and operates in closed loops rather than unpredictable urban traffic. Customers include DHL, Vantec, and bp. The new capital will fund commercial deployments in Europe, the UK, and the Middle East, focusing on repetitive tasks like transporting goods across factory yards or monitoring large energy facilities.

The contrast between Oxa's industrial focus and Waymo's public road ambitions is stark. Waymo, backed by Alphabet, launched in Denver in fall 2025 with support from Governor Jared Polis and Mayor Mike Johnston, according to CBS News. The company developed technology to handle harsh winter conditions — its first test outside milder climates. But operating in cities means navigating school buses, pedestrians, construction zones, and the infinite variability of human behavior. Oxa's thesis is simpler: autonomy will prove itself in predictable environments before it conquers the chaos of public roads. It's a less spectacular vision than the robotaxi dreams that once dominated headlines, but potentially far more deployable in the near term.

The $103 million Oxa raised represents only the first close of its Series D, with additional capital expected later. The company has not disclosed a valuation or the exact breakdown of investments by participant, but the UK National Wealth Fund's $50 million commitment suggests policymakers see industrial autonomy as a strategic priority. If Oxa's approach succeeds, it could offer a roadmap for scaling autonomous technology without the legal and safety complexities that Waymo and others face on public roads.

For now, the Austin school bus incident serves as a reminder that even when autonomous systems perform correctly, the humans in the loop can still fail. The robotaxi stopped and asked for guidance. The remote operator, likely monitoring multiple vehicles from a control room far from the scene, made a split-second call that put children at risk. As the NTSB investigates and lawmakers debate liability frameworks, the question isn't just whether autonomous vehicles are safer than human drivers. It's whether the industry's safety architecture — remote operators, software failsafes, regulatory patchwork — is robust enough to handle the messy reality of roads shared with school buses, distracted drivers, and unpredictable human behavior. Oxa's industrial bet suggests one answer: start where the stakes are lower and the environment is controlled. Waymo's Austin incident suggests another: the technology may be ready, but the humans supervising it are not.